tDataShuffling

Shuffles the data in an input table to protect the actual data while keeping a functional data set. The data remains usable for purposes such as testing and training.

tDataShuffling replaces original values with other values for the same column from a different row.
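The replacement rule above can be illustrated with a short Python sketch. It is not Talend's implementation. The rows-as-dictionaries representation and the helper name `shuffle_column` are hypothetical, and the sketch uses Sattolo's algorithm (a swapped-in technique) so that every value is guaranteed to land in a different row. This documentation does not state whether tDataShuffling gives the same guarantee.

```python
import random


def shuffle_column(rows, column, seed=None):
    """Return copies of `rows` where the values of `column` move to other rows.

    Uses Sattolo's algorithm, which builds a random cyclic permutation: with two
    or more rows, no value stays at the row position it came from.
    """
    rng = random.Random(seed)
    values = [row[column] for row in rows]
    # Sattolo's variant of Fisher-Yates: j is drawn strictly below i.
    for i in range(len(values) - 1, 0, -1):
        j = rng.randrange(i)
        values[i], values[j] = values[j], values[i]
    # Copy each row and overwrite only the shuffled column.
    return [{**row, column: value} for row, value in zip(rows, values)]


rows = [
    {"customer_id": 0, "credit_card": "4244487462024688"},
    {"customer_id": 1, "credit_card": "3458687462024688"},
    {"customer_id": 2, "credit_card": "4639587470586299"},
]
for row in shuffle_column(rows, "credit_card", seed=42):
    print(row)
```

Because the permutation moves positions rather than values, two rows holding the same value can still end up with identical values after shuffling.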

If you define one or several columns as the partition group, the whole table is split into partitions. The rows in each partition share the same values in the partitioning columns. The shuffling process is then applied independently to each partition, and all partitions are merged into one output table.

If you do not set columns as the partition group, the shuffling process is applied to the whole input table.
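Partitioning can be sketched the same way, assuming each row is a Python dictionary. The helper `shuffle_by_partition` is hypothetical. It groups row positions by the values of the partitioning columns, shuffles the target column inside each group with `random.shuffle`, and writes the values back so the merged output keeps the original row order. Passing no partitioning columns makes the whole table a single partition, mirroring the behavior described above.

```python
import random
from collections import defaultdict


def shuffle_by_partition(rows, column, partition_cols=(), seed=None):
    """Shuffle `column` independently inside each partition, then merge.

    Rows are partitioned by the tuple of their `partition_cols` values. With no
    partition columns every row gets the same empty key, so the whole table is
    one partition.
    """
    rng = random.Random(seed)
    partitions = defaultdict(list)  # partition key -> row positions
    for position, row in enumerate(rows):
        key = tuple(row[c] for c in partition_cols)
        partitions[key].append(position)

    result = [dict(row) for row in rows]
    for positions in partitions.values():
        values = [rows[p][column] for p in positions]
        rng.shuffle(values)  # plain Fisher-Yates: a value may stay in place
        for p, value in zip(positions, values):
            result[p][column] = value
    # Rows keep their original positions, so the merged output is `result`.
    return result


rows = [
    {"lname": "Nowmer", "country": "Mexico"},
    {"lname": "Whelply", "country": "Canada"},
    {"lname": "Kanagaki", "country": "Mexico"},
    {"lname": "Spence", "country": "Canada"},
]
print(shuffle_by_partition(rows, "lname", ["country"], seed=1))
```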

In local mode, Apache Spark 1.4.0 and later versions are supported.

Depending on the Talend product you are using, this component can be used in one, some or all of the following Job frameworks:

- Standard: see tDataShuffling Standard properties.

  The component in this framework is available in Talend Data Management Platform, Talend Big Data Platform, Talend Real Time Big Data Platform, Talend Data Services Platform, Talend MDM Platform and in Talend Data Fabric.

- Spark Batch: see tDataShuffling properties for Apache Spark Batch.

  The component in this framework is available in all Talend Platform products with Big Data and in Talend Data Fabric.

tDataShuffling Standard properties

These properties are used to configure tDataShuffling running in the Standard Job framework.

The Standard tDataShuffling component belongs to the Data Quality family.

The component in this framework is available in Talend Data Management Platform, Talend Big Data Platform, Talend Real Time Big Data Platform, Talend Data Services Platform, Talend MDM Platform and in Talend Data Fabric.

Basic settings

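| Property | Description |
|---|---|
| Schema and Edit schema | A schema is a row description. It defines the number of fields (columns) to be processed and passed on to the next component. Click Sync columns to retrieve the schema from the previous component connected in the Job. Click Edit schema to make changes to the schema. |
| | Built-In: You create and store the schema locally for this component. |
| | Repository: You have already created the schema and stored it in the Repository. |
| Shuffling columns | Define the groups of columns to be shuffled: in Column, select a column whose values are shuffled, and in Group ID, select its group identifier. Columns having the same group identifier are shuffled together. |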

Advanced settings

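| Property | Description |
|---|---|
| Seed for random generator | Set a random number if you want to shuffle the data in the same order each time you run the Job. If you do not set the seed, the component creates a new random seed for each execution. |
| Buffer size | Type in the size of physical memory, in number of rows, you want to allocate to the processed data. |
| Partitioning columns | Add the columns used for partitioning the data. The selected columns separate the shuffling process into small partitions. Only the rows sharing the same values in these columns are shuffled with each other. |
| tStatCatcher Statistics | Select this check box to gather the Job processing metadata at the Job level as well as at each component level. |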
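The effect of the seed can be shown with Python's `random.Random`, used here purely as a stand-in for whatever random generator the component uses internally (an assumption). The hypothetical `shuffled_order` helper returns a permutation of row positions. The same seed always yields the same permutation, while omitting the seed lets Python seed the generator from operating-system randomness or the current time.

```python
import random


def shuffled_order(n_rows, seed=None):
    """Return a shuffled list of row positions 0..n_rows-1."""
    # seed=None: Python seeds from OS randomness if available, else the time.
    rng = random.Random(seed)
    order = list(range(n_rows))
    rng.shuffle(order)
    return order


# Same seed, same order: runs are reproducible.
assert shuffled_order(10, seed=12345) == shuffled_order(10, seed=12345)
# No seed: each call usually yields a different order.
print(shuffled_order(10), shuffled_order(10))
```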

Usage

| Item | Description |
|---|---|
| Usage rule | This component is usually used as an intermediate component, and it requires an input component and an output component. |

Shuffling data values to restrict the use of actual sensitive data

This scenario applies only to Talend Data Management Platform, Talend Big Data Platform, Talend Real Time Big Data Platform, Talend Data Services Platform, Talend MDM Platform and Talend Data Fabric.

With the tDataShuffling component, you can shuffle sensitive information to replace it with other values for the same column from a different row, allowing production data to be safely used for purposes such as testing and training.

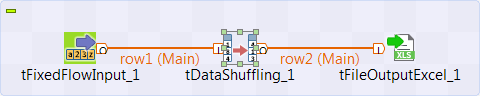

This scenario describes a Job which uses:

- the tFixedFlowInput component to generate personal data including credit card numbers,
- the tDataShuffling component to shuffle original data and replace values with other values for the same column from a different row,
- the tFileOutputExcel component to output the shuffled data set.

Setting up the Job

- Drop the following components from the Palette onto the design workspace: tFixedFlowInput, tDataShuffling and tFileOutputExcel.
- Connect the three components together using Main links.

Configuring the input component

- Double-click tFixedFlowInput to open its Basic settings view in the Component tab.

- Create the schema through the Edit schema button. In the open dialog box, click the [+] button and add the columns that will hold the initial input data: customer_id, credit_card, lname, fname, mi, address1, city, state_province, postal_code, country, phone and email.

- Click OK.

- In the Number of rows field, enter 1.

- In the Mode area, select the Use Inline Content option.

- In the Content table, enter the customer data you want to shuffle, for example:

      0|4244487462024688|Nowmer|Sheri|A.|2433 Bailey Road|Tlaxiaco|Oaxaca|15057|Mexico|271-555-9715|SheriNowmer@@Tlaxiaco.org
      1|3458687462024688|Nowmer|Alan|A.|2433 Bailey Road|Tlaxiaco|Oaxaca|15057|Mexico|271-555-9715|AlanNowmer@Tlaxiaco.org.org
      2|4639587470586299|Whelply|Derrick|I.|2219 Dewing Avenue|Sooke|BC|17172|Canada|211-555-7669|DerrickWhelply@Sooke.org
      3|2541387475757600|Derry|Jeanne||7640 First Ave.|Issaquah|WA|73980|USA|656-555-2272|JeanneDerry@Issaquah.org
      4|7845987500482201|Spence|Michael|J.|337 Tosca Way|Burnaby|BC|74674|Canada|929-555-7279|MichaelSpence@Burnaby.org
      5|1547887514054179|Gutierrez|Maya||8668 Via Neruda|Novato|CA|57355|$$#|387-555-7172|MayaGutierrez@Novato.org
      6|5469887517782449|Damstra|Robert|F.|1619 Stillman Court|Lynnwood|WA|90792|$$#|922-555-5465|RobertDamstra@Lynnwood.org
      7|54896387521172800|Kanagaki|Rebecca||2860 D Mt. Hood Circle|San Andres|DF|13343|Mexico|515-555-6247|RebeccaKanagaki@Tlaxiaco.org
      8|47859687539744377|Brunner|Kim|H.|6064 Brodia Court|San Andres|DF|12942|Mexico|411-555-6825|Kim@Brunner@San Andresorg
      9|35698487544797658|Blumberg|Brenda|C.|7560 Trees Drive|Sooke|BC|$$|Canada|815-555-3975|BrendaBlumberg@Richmond.org
      10|36521487568712234|Stanz|Darren|M.|1019 Kenwal Rd.|$$#|OR|82017|USA|847-555-5443|DarrenStanz@Lake Oswego.org
      ...
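To see how each line of the inline content maps onto the twelve schema columns, the following Python sketch splits lines on `|`, the separator used in the sample data. The helper `parse_inline_content` is hypothetical and does not reproduce tFixedFlowInput itself. It rejects lines that do not have exactly one field per column, which makes a missing or extra separator easy to spot. Empty fields, such as the missing middle initial for Jeanne Derry, are kept as empty strings.

```python
COLUMNS = [
    "customer_id", "credit_card", "lname", "fname", "mi", "address1",
    "city", "state_province", "postal_code", "country", "phone", "email",
]

# Two lines copied from the sample content above.
CONTENT = """\
0|4244487462024688|Nowmer|Sheri|A.|2433 Bailey Road|Tlaxiaco|Oaxaca|15057|Mexico|271-555-9715|SheriNowmer@@Tlaxiaco.org
3|2541387475757600|Derry|Jeanne||7640 First Ave.|Issaquah|WA|73980|USA|656-555-2272|JeanneDerry@Issaquah.org
"""


def parse_inline_content(text, columns=COLUMNS, sep="|"):
    """Split each non-empty line on `sep` and map the fields to column names."""
    rows = []
    for line in text.splitlines():
        if not line.strip():
            continue  # skip blank lines
        fields = line.split(sep)
        if len(fields) != len(columns):
            raise ValueError(f"expected {len(columns)} fields, got {len(fields)}: {line!r}")
        rows.append(dict(zip(columns, fields)))
    return rows


for row in parse_inline_content(CONTENT):
    print(row["customer_id"], row["lname"], repr(row["mi"]))
```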

Configuring the tDataShuffling component

- Double-click tDataShuffling to display the Basic settings view and define the component properties.

- Click Sync columns to retrieve the schema defined in the input component.

- In the Shuffling columns table, click the [+] button to add four rows, and then:

  - in the Column field, select the columns where data will be shuffled,
  - in the Group ID field, select the group identifier for each column. The columns having the same group identifier are shuffled together.

  In this example, there are two groups of columns to be shuffled:

  - Group ID 1: credit_card
  - Group ID 2: lname, fname and mi

  The Job will replace credit card numbers within the credit_card column with values from different rows. It will also keep last names, first names and middle initials from the lname, fname and mi columns together and replace them with values from different rows.

- Click the Advanced settings tab.

- In the Partitioning columns table, click the [+] button to add one row and select the country column.

  The Job will shuffle only the original data rows sharing the same value for the partitioning columns. In this example, the component is configured to apply the shuffling process to the rows sharing the same value for the country column.
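The configuration above, with shuffling groups combined with a partitioning column, can be sketched in Python as follows. The helper `shuffle_groups` is hypothetical and the rows are taken from the sample content. Within each country, every group gets its own random permutation, and all columns of a group are copied from the same source row. As a result, lname, fname and mi stay together while credit_card moves independently. This illustrates the documented behavior and is not Talend's implementation.

```python
import random
from collections import defaultdict

# Group configuration from this scenario: group ID -> columns shuffled together.
GROUPS = {1: ["credit_card"], 2: ["lname", "fname", "mi"]}


def shuffle_groups(rows, groups, partition_cols=(), seed=None):
    """Give each column group its own permutation inside each partition.

    All columns of one group are copied from the same source row, so a last
    name, first name and middle initial always travel together.
    """
    rng = random.Random(seed)
    partitions = defaultdict(list)  # partition key -> row positions
    for position, row in enumerate(rows):
        partitions[tuple(row[c] for c in partition_cols)].append(position)

    result = [dict(row) for row in rows]
    for positions in partitions.values():
        for group_id in sorted(groups):
            sources = positions[:]
            rng.shuffle(sources)  # one independent permutation per group
            for target, source in zip(positions, sources):
                for column in groups[group_id]:
                    result[target][column] = rows[source][column]
    return result


rows = [
    {"credit_card": "4244487462024688", "lname": "Nowmer", "fname": "Sheri", "mi": "A.", "country": "Mexico"},
    {"credit_card": "4639587470586299", "lname": "Whelply", "fname": "Derrick", "mi": "I.", "country": "Canada"},
    {"credit_card": "7845987500482201", "lname": "Spence", "fname": "Michael", "mi": "J.", "country": "Canada"},
    {"credit_card": "54896387521172800", "lname": "Kanagaki", "fname": "Rebecca", "mi": "", "country": "Mexico"},
    {"credit_card": "47859687539744377", "lname": "Brunner", "fname": "Kim", "mi": "H.", "country": "Mexico"},
]
for row in shuffle_groups(rows, GROUPS, partition_cols=["country"], seed=3):
    print(row)
```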

Configuring the output component and executing the Job

- Double-click the tFileOutputExcel component to display the Basic settings view and define the component properties.

- Set the destination file name as well as the sheet name, and then select the Define all columns auto size check box.

- Save your Job and press F6 to execute it.

  The tDataShuffling component shuffles data in the selected columns and writes the result in an output file.

- Right-click the output component and select Data Viewer to display the shuffled data.

  tDataShuffling outputs shuffled data. It shuffles values within the first group of columns (credit_card) and within the second group of columns (lname, fname and mi). The shuffling process only applies to the rows sharing the same value for the country column, as defined in the component advanced settings.

  Sensitive personal information in the input data has been shuffled, but the data still looks real and consistent. The shuffled data is still usable for purposes other than production.
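Beyond reading the output in the Data Viewer, the two properties described above can also be checked programmatically. This Python sketch uses a hypothetical `check_shuffle` helper operating on rows as dictionaries. It verifies that the partitioning columns are unchanged row by row and that the value tuples of each group were only rearranged within their partition.

```python
from collections import Counter


def check_shuffle(original, shuffled, groups, partition_cols):
    """Return a list of problems; an empty list means the output is consistent."""
    if len(original) != len(shuffled):
        return ["row count changed"]
    problems = []
    # Partitioning columns are not shuffled, so they must be unchanged row by row.
    for position, (before, after) in enumerate(zip(original, shuffled)):
        for c in partition_cols:
            if before[c] != after[c]:
                problems.append(f"row {position}: partition column {c} changed")

    def group_values(rows, columns):
        # Count each (partition key, group values) combination.
        return Counter(
            (tuple(r[c] for c in partition_cols), tuple(r[c] for c in columns))
            for r in rows
        )

    # Shuffling may only rearrange whole group tuples inside a partition.
    for group_id, columns in groups.items():
        if group_values(original, columns) != group_values(shuffled, columns):
            problems.append(f"group {group_id}: values changed or crossed partitions")
    return problems
```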

Further restricting the use of sensitive data

When shuffling data, it is still advisable to mask sensitive data. Remember also to consider the relationships between columns when shuffling data, and make sure the original data set cannot be reconstructed.

In this scenario, last names and first names are grouped together, but the email addresses are not in the same group. Consequently, the email column no longer matches the lname and fname columns in the same row. Since the email column usually contains first names and last names, it may help attackers reconstruct the original data.
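The email weakness can be made concrete with a small check. The hypothetical helper `names_revealed_by_email` below looks for rows whose unshuffled email local part spells out a first name and last name from the data set that differ from the shuffled names now shown in that row. Each hit is a row whose original names can be read back. The two rows are an assumed shuffling outcome for the two customers sharing the `$$#` country value, not actual Job output.

```python
def names_revealed_by_email(rows):
    """Map customer_id -> (fname, lname) that the row's email still exposes.

    A hit means the email local part spells out a name from the data set that
    differs from the (shuffled) names now shown in the same row.
    """
    known_names = {(r["fname"], r["lname"]) for r in rows}
    hits = {}
    for row in rows:
        local_part = row["email"].split("@", 1)[0].lower()
        for fname, lname in known_names:
            spelled_out = (fname + lname).lower() == local_part
            if spelled_out and (fname, lname) != (row["fname"], row["lname"]):
                hits[row["customer_id"]] = (fname, lname)
    return hits


# Two rows after lname/fname were shuffled but email was left in place.
shuffled = [
    {"customer_id": "5", "fname": "Robert", "lname": "Damstra", "email": "MayaGutierrez@Novato.org"},
    {"customer_id": "6", "fname": "Maya", "lname": "Gutierrez", "email": "RobertDamstra@Lynnwood.org"},
]
print(names_revealed_by_email(shuffled))
```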

Additionally, the address1, city and email columns are not in any group, so they are not shuffled. This means it is possible to infer, for example, that Robert Damstra lives at 1619 Stillman Court, Lynnwood.

- To avoid the use of real credit card numbers, you can mask credit card numbers using the tDataMasking component.
- To avoid the identification of customers through their email addresses, you can mask email addresses using the tDataMasking component.
- To make it more difficult to read real addresses, you can add the address1 and city columns to other groups.
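For illustration only, the following Python sketch masks a card number by keeping its last four characters. It is a generic masking technique and does not describe the functions that tDataMasking offers. `mask_card_number` and its parameters are hypothetical.

```python
def mask_card_number(number: str, keep_last: int = 4, mask_char: str = "X") -> str:
    """Hide all but the last `keep_last` characters of a card number."""
    if keep_last < 0:
        raise ValueError("keep_last must be zero or positive")
    cut = max(len(number) - keep_last, 0)  # number of characters to hide
    return mask_char * cut + number[cut:]


print(mask_card_number("4244487462024688"))  # prints XXXXXXXXXXXX4688
```

Computing the cut point explicitly avoids the `number[-0:]` pitfall, which would return the whole string when `keep_last` is 0.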

As tDataShuffling is supported on the Spark framework, you can convert this Standard Job to a Spark Batch Job by editing the Job properties. This way, you do not need to redefine the settings of the components in the Job.

tDataShuffling properties for Apache Spark Batch

These properties are used to configure tDataShuffling running in the Spark Batch Job framework.

The Spark Batch tDataShuffling component belongs to the Data Quality family.

The component in this framework is available in all Talend Platform products with Big Data and in Talend Data Fabric.

Basic settings

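| Property | Description |
|---|---|
| Schema and Edit schema | A schema is a row description. It defines the number of fields (columns) to be processed and passed on to the next component. Click Sync columns to retrieve the schema from the previous component connected in the Job. Click Edit schema to make changes to the schema. |
| | Built-In: You create and store the schema locally for this component. |
| | Repository: You have already created the schema and stored it in the Repository. |
| Shuffling columns | Define the groups of columns to be shuffled: in Column, select a column whose values are shuffled, and in Group ID, select its group identifier. Columns having the same group identifier are shuffled together. |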

Advanced settings

| Property | Description |
|---|---|
| Seed for random generator | Set a random number if you want to shuffle the data in the same order each time you run the Job. If you do not set the seed, the component creates a new random seed for each execution. |
| Partitioning columns | Add the columns used for partitioning the data. The selected columns separate the shuffling process into small partitions. Only the rows sharing the same values in these columns are shuffled with each other. |
| Use Timestamp format for Date type | Select this check box to output dates, hours, minutes and seconds contained in your Date-type data. |

Usage

| Item | Description |
|---|---|
| Usage rule | This component is used as an intermediate step. This component, along with the Spark Batch component Palette it belongs to, appears only when you are creating a Spark Batch Job. Note that in this documentation, unless otherwise explicitly stated, a scenario presents only Standard Jobs, that is to say traditional Talend data integration Jobs. |
| Spark Connection | In the Spark Configuration tab in the Run view, define the connection to a given Spark cluster for the whole Job. In addition, since the Job expects its dependent jar files for execution, you must specify the directory in the file system to which these jar files are transferred so that Spark can access these files. This connection is effective on a per-Job basis. |

Related scenarios

No scenario is available for the Spark Batch version of this component yet.