tNLPPredict

Uses a classifier model generated by tNLPModel to predict and label the input text.

tNLPPredict outputs labeled text and the labels for tokens in two separate columns.

This component can run only with Spark 1.6.
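To make the two output columns concrete, the following Python sketch shows one way a row's tokens and their predicted labels could be turned into a labeled-text value and a labels value. It is illustrative only. The inline `<LABEL>token</LABEL>` tagging, the space-separated labels and the `O` marker for tokens outside any entity are assumptions, not the documented format of the tNLPPredict output columns. `build_output_columns` is a hypothetical helper.

```python
from typing import List, Tuple

# Assumption: "O" marks tokens outside any named entity (a common
# convention, not confirmed for tNLPPredict).
OUTSIDE = "O"


def build_output_columns(tokens: List[str], labels: List[str]) -> Tuple[str, str]:
    """Hypothetical helper: return (labeled_text, labels) for one row."""
    if len(tokens) != len(labels):
        raise ValueError("each token needs exactly one label")
    # Wrap entity tokens in inline tags; leave other tokens unchanged.
    tagged = [
        tok if lab == OUTSIDE else f"<{lab}>{tok}</{lab}>"
        for tok, lab in zip(tokens, labels)
    ]
    return " ".join(tagged), " ".join(labels)


if __name__ == "__main__":
    labeled_text, label_column = build_output_columns(
        ["Alice", "met", "Bob", "."], ["PERSON", "O", "PERSON", "O"]
    )
    print(labeled_text)  # <PERSON>Alice</PERSON> met <PERSON>Bob</PERSON> .
    print(label_column)  # PERSON O PERSON O
```

The point of the sketch is the one-label-per-token pairing: the labels column and the labeled text are two views of the same token-level predictions.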

tNLPPredict properties for Apache Spark Batch

These properties are used to configure tNLPPredict running in the Spark Batch Job framework.

The Spark Batch tNLPPredict component belongs to the Natural Language Processing family.

The component in this framework is available when you have subscribed to any Talend Platform product with Big Data or Talend Data Fabric.

Basic settings

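| Property | Description |
|---|---|
| Schema and Edit Schema | A schema is a row description. It defines the number of fields (columns) to be processed and passed on to the next component. Click Sync columns to retrieve the schema from the previous component connected in the Job. Click Edit schema to make changes to the schema. Read-only columns holding the labeled text and the labels are added to the output schema. |
| | Built-In: You create and store the schema locally for this component only. |
| | Repository: You have already created the schema and stored it in the Repository. |
| Define a storage configuration component | Select the configuration component to be used to provide the configuration information for the connection to the target file system. If you leave this check box clear, the target file system is the local system. The configuration component to be used must be present in the same Job. |
| Original text column | Select the column to be labeled in the input schema. |
| Token column | Select the column used for feature construction and prediction. |
| Additional Features | Select this check box to add additional features for prediction. When you add features, their order must be the same as the order of the additional features used to generate the model with tNLPModel. |
| NLP model path | Set the path to the local folder from where you want to retrieve the model. If the model is stored in a specific file system, for example S3, select the Define a storage configuration component check box and use the corresponding configuration component in the Job. The button for browsing does not work with the Spark Local mode; if you are using the Spark Yarn or the Spark Standalone mode, make sure that the connection is properly configured in a configuration component in the same Job. |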
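One plausible reason for the ordering requirement on Additional Features is positional. Many trained classifiers read features from fixed positions in an input vector, so the same features supplied in a different order land in the wrong positions without any error. The Python sketch below illustrates this with hypothetical helpers (`to_vector`, `check_same_order`) and made-up feature names. It does not reproduce how tNLPPredict or tNLPModel store features internally.

```python
from typing import Dict, List, Sequence


def to_vector(features: Dict[str, float], order: Sequence[str]) -> List[float]:
    """Place each feature value at the fixed position given by `order`."""
    return [features[name] for name in order]


def check_same_order(model_order: Sequence[str], predict_order: Sequence[str]) -> None:
    """Fail fast if prediction-time features differ from training-time ones."""
    if list(model_order) != list(predict_order):
        raise ValueError(
            f"feature order mismatch: model={list(model_order)}, "
            f"predict={list(predict_order)}"
        )


if __name__ == "__main__":
    # Hypothetical feature values for a single token.
    feats = {"is_title": 1.0, "is_noun": 0.0}
    print(to_vector(feats, ["is_title", "is_noun"]))  # [1.0, 0.0]
    print(to_vector(feats, ["is_noun", "is_title"]))  # [0.0, 1.0]: same data, different vector
    check_same_order(["is_title", "is_noun"], ["is_title", "is_noun"])  # passes silently
```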

Usage

| Item | Description |
|---|---|
| Usage rule | This component is used as an intermediate step. This component, along with the Spark Batch component Palette it belongs to, appears only when you are creating a Spark Batch Job. |
| Spark Batch Connection | You need to use the Spark Configuration tab in the Run view to define the connection to a given Spark cluster for the whole Job. In addition, since the Job expects its dependent jar files for execution, you must specify the directory in the file system to which these jar files are transferred so that Spark can access these files. This connection is effective on a per-Job basis. |

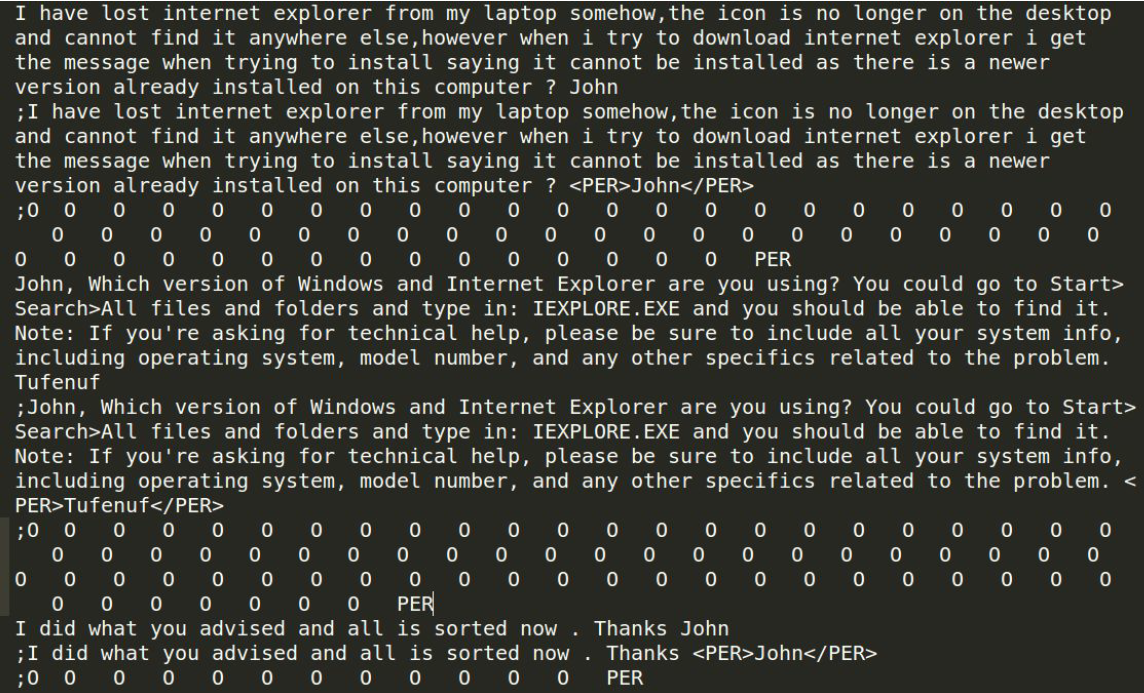

Scenario: Extracting named entities using a classification model

This scenario applies only to a subscription-based Talend Platform solution with Big Data or Talend Data Fabric.

The tNLPPredict component predicts and automatically labels named entities using a classification model generated by the tNLPModel component.

You can find more information about natural language processing on the Talend Help Center (https://help.talend.com).

Creating a Job to extract named entities from text data

In this Job, tNLPPredict automatically labels text data divided into tokens, using a classification model.

- Drop the following components from the Palette onto the design workspace: tMysqlInput, tNLPPredict, tFilterColumns and tFileOutputDelimited.
- Connect the components using Row > Main connections.

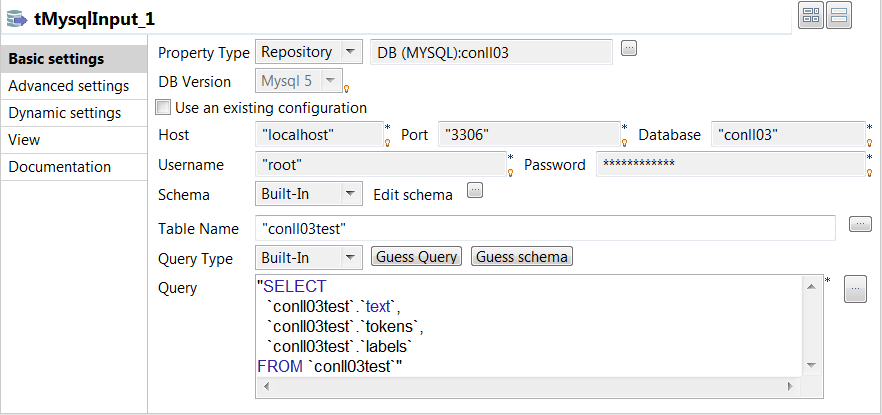

Configuring the input component

The tMysqlInput component retrieves the input data from the MySQL database.

- Double-click the tMysqlInput component to open its Basic settings view and define its properties.
- From the Property Type list, select Repository if you have already stored the connection to the database in the Metadata node of the Repository tree view. The property fields that follow are automatically filled in.
- Set the Schema as Built-In and click Edit schema to define the desired schema. In this example, three columns are added to the schema: text, tokens and labels.
- Set the Query Type as Built-In. In the Query box, enter the query required to retrieve the desired columns from the table.
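The Python sketch below illustrates the Query box. It uses an in-memory SQLite database as a stand-in for the MySQL table and selects only the three schema columns. The table name `ner_input`, its extra `id` column, the space-separated token format and the sample row are all hypothetical. In Talend Studio the query is entered in the Query box as a double-quoted string, for example `"SELECT text, tokens, labels FROM ner_input"`.

```python
import sqlite3
from typing import List, Tuple

# Hypothetical query; this simple SELECT is also valid MySQL syntax.
QUERY = "SELECT text, tokens, labels FROM ner_input"


def make_demo_db() -> sqlite3.Connection:
    """Create an in-memory stand-in for the MySQL table (hypothetical data)."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE ner_input (id INTEGER PRIMARY KEY, text TEXT, tokens TEXT, labels TEXT)"
    )
    # Assumption: tokens are stored as one space-separated string.
    conn.execute(
        "INSERT INTO ner_input (text, tokens, labels) VALUES (?, ?, ?)",
        ("Alice met Bob.", "Alice met Bob .", "PERSON O PERSON O"),
    )
    conn.commit()
    return conn


def fetch_rows(conn: sqlite3.Connection) -> List[Tuple[str, str, str]]:
    """Run the query; the id column is left out because it is not selected."""
    return conn.execute(QUERY).fetchall()


if __name__ == "__main__":
    for row in fetch_rows(make_demo_db()):
        print(row)  # ('Alice met Bob.', 'Alice met Bob .', 'PERSON O PERSON O')
```

The selected columns must line up, in number and order, with the columns defined in the component schema.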

Extracting named entities from text data

and automatically labels text data, using a classification model generated by the

tNLPModel component.

-

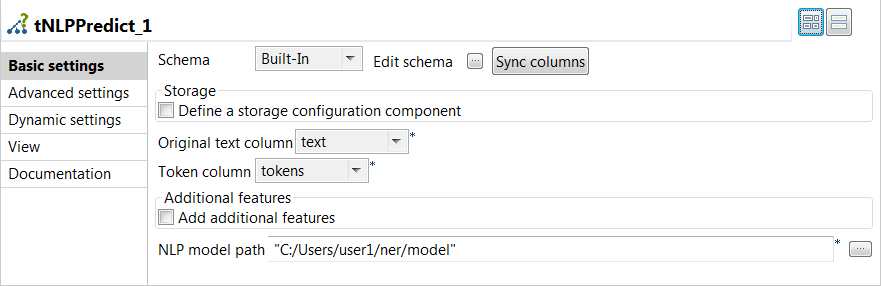

Double click the tNLPPredict component to open its

Basic settings view and define its properties.

-

Click Sync columns to retrieve the

schema from the previous component connected in the Job.

-

From the Original text column list, select the

column that holds the text to be labeled, which is

text in this example. -

From the Token column list, select the column

used for feature construction and prediction, which is

tokens in this example -

From the NLP Library list, select the same

library you used for generating the model. -

Specify the path to the named entity recognition model in the

NLP model path.
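The following Python sketch illustrates token-level prediction in toy form. Features are built for each token from the Token column, and a trained classifier maps each feature set to a label. Everything here is hypothetical: `token_features`, `predict_labels` and `toy_classifier` are made-up names, the feature set is arbitrary, and the classifier is a tiny lookup standing in for the model loaded from the NLP model path. The sketch also suggests why the NLP Library must match the one used for training: a classifier can only interpret features built the same way as during training.

```python
from typing import Callable, Dict, List, Union

Features = Dict[str, Union[str, bool]]


def token_features(tokens: List[str], i: int) -> Features:
    """Hypothetical per-token features built from the Token column."""
    tok = tokens[i]
    return {
        "word": tok.lower(),
        "is_title": tok.istitle(),
        "prev": tokens[i - 1].lower() if i > 0 else "<s>",
    }


def predict_labels(tokens: List[str], classify: Callable[[Features], str]) -> List[str]:
    """Build features for every token and let the classifier label each one."""
    return [classify(token_features(tokens, i)) for i in range(len(tokens))]


# Toy stand-in for a trained model: NOT a real named entity recognizer.
KNOWN_PERSONS = {"alice", "bob"}


def toy_classifier(f: Features) -> str:
    return "PERSON" if f["is_title"] and f["word"] in KNOWN_PERSONS else "O"


if __name__ == "__main__":
    print(predict_labels(["Alice", "met", "Bob", "."], toy_classifier))
    # ['PERSON', 'O', 'PERSON', 'O']
```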


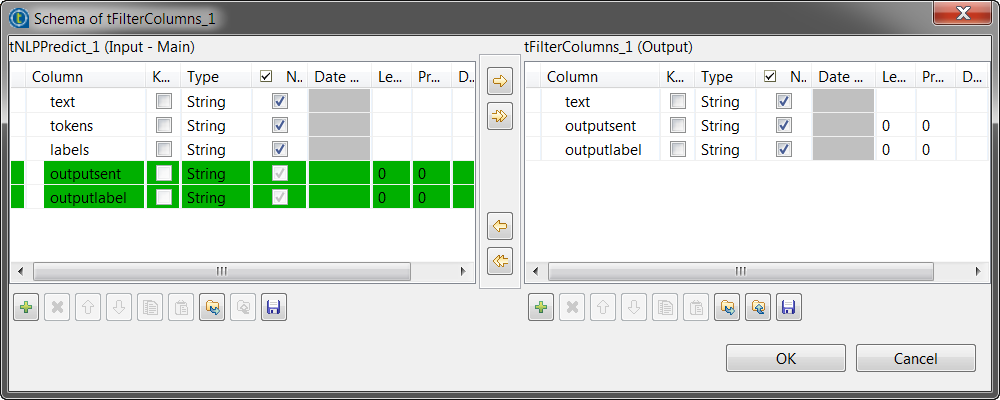

- Double-click the tFilterColumns component to open its Basic settings view and define its properties.
- Click Sync columns to retrieve the schema from the previous component connected in the Job.
- Set the Schema as Built-In and click Edit schema to keep only the columns that hold the original text, the labeled text and the labels.
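The column selection done by tFilterColumns can be pictured with the Python sketch below. It keeps only the listed columns of each row, in the listed order. The column names `labeled_text` and `token_labels` are hypothetical placeholders for the read-only columns that tNLPPredict adds; use the names shown in your own schema.

```python
from typing import Dict, Iterable, List, Sequence

Row = Dict[str, str]

# Hypothetical column names: replace them with the ones in your schema.
KEEP = ("text", "labeled_text", "token_labels")


def filter_columns(rows: Iterable[Row], keep: Sequence[str] = KEEP) -> List[Row]:
    """Keep only the listed columns, in the listed order."""
    return [{name: row[name] for name in keep} for row in rows]


if __name__ == "__main__":
    row = {
        "text": "Alice met Bob.",
        "tokens": "Alice met Bob .",
        "labels": "",
        "labeled_text": "<PERSON>Alice</PERSON> met <PERSON>Bob</PERSON> .",
        "token_labels": "PERSON O PERSON O",
    }
    print(filter_columns([row]))
```

Python dictionaries preserve insertion order, so each output row lists its columns in the order given by `keep`.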


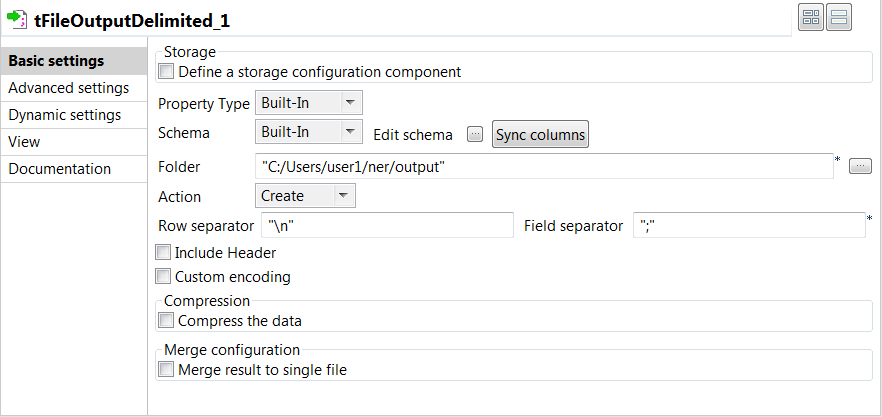

- Double-click the tFileOutputDelimited component to open its Basic settings view and define its properties.
- Click Sync columns to retrieve the schema from the previous component connected in the Job.
- In the Folder field, specify the path to the folder where you want to store the labeled text and the labels.
- Enter "\n" in the Row separator field and ";" in the Field separator field.
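With these separators, each row is written as one line whose fields are joined by semicolons. The Python sketch below reproduces that layout with the standard `csv` module (`delimiter=";"`, `lineterminator="\n"`); the sample values are hypothetical. The `csv` module quotes any field that contains the separator, which may differ from what tFileOutputDelimited does under its own settings.

```python
import csv
import io
from typing import Iterable, Sequence, TextIO


def write_delimited(rows: Iterable[Sequence[str]], out: TextIO) -> None:
    """Write one line per row: fields separated by ';', rows ended by a newline."""
    writer = csv.writer(out, delimiter=";", lineterminator="\n")
    writer.writerows(rows)


if __name__ == "__main__":
    buf = io.StringIO()
    write_delimited(
        [["Alice met Bob.", "<PERSON>Alice</PERSON> met <PERSON>Bob</PERSON> .", "PERSON O PERSON O"]],
        buf,
    )
    print(buf.getvalue(), end="")
    # Alice met Bob.;<PERSON>Alice</PERSON> met <PERSON>Bob</PERSON> .;PERSON O PERSON O
```

When writing to a real file instead of a `StringIO` buffer, open it with `newline=""`, as the `csv` documentation recommends.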

Press F6 to save and execute the Job.

The output files contain the original text, the labeled text and the labels. The named entity recognition task was performed correctly: person names were extracted from the original text.