tDenormalize

Depending on the Talend product you are using, this component can be used in one, some or all of the following Job frameworks:

- Standard: see tDenormalize Standard properties. The component in this framework is available in all Talend products.
- MapReduce: see tDenormalize MapReduce properties (deprecated). The component in this framework is available in all subscription-based Talend products with Big Data and Talend Data Fabric.
- Spark Batch: see tDenormalize properties for Apache Spark Batch. The component in this framework is available in all subscription-based Talend products with Big Data and Talend Data Fabric.
- Spark Streaming: see tDenormalize properties for Apache Spark Streaming. This component is available in Talend Real Time Big Data Platform and Talend Data Fabric.

tDenormalize Standard properties

These properties are used to configure tDenormalize running in the Standard Job framework.

The Standard tDenormalize component belongs to the Processing family.

The component in this framework is available in all Talend products.

Basic settings

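| Property | Description |
| --- | --- |
| Schema and Edit schema | A schema is a row description. It defines the number of fields to be processed and passed on to the next component. Click Edit schema to make changes to the schema. |
| | Built-In: You create and store the schema locally for this component only. |
| | Repository: You have already created the schema and stored it in the Repository. |
| To denormalize | In this table, define the parameters used to denormalize your columns. Column: Select the column to denormalize. Delimiter: Type in the separator, between double quotes, that delimits the grouped values. Merge same value: Select this check box to merge identical values. |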

Advanced settings

| Property | Description |
| --- | --- |
| tStatCatcher Statistics | Select this check box to collect the log data at component level. |

Global Variables

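ERROR_MESSAGE: the error message generated by the component when an error occurs.

NB_LINE: the number of rows read by an input component or transferred to an output component.

A Flow variable functions during the execution of a component while an After variable functions after the execution of the component.

To fill up a field or expression with a variable, press Ctrl + Space to access the variable list and choose the variable to use from it.

For further information about variables, see the Talend Studio User Guide.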

Usage

| Property | Description |
| --- | --- |
| Usage rule | This component can be used as an intermediate step in a data flow. |
| Limitation | Note that this component may change the order of the rows in the incoming flow. |

Denormalizing on one column

This scenario illustrates a Job denormalizing one column in a delimited

file.

Denormalizing on one column

-

Drop the following components: tFileInputDelimited, tDenormalize, tLogRow

from the Palette to the design

workspace. -

Connect the components using Row

main connections. -

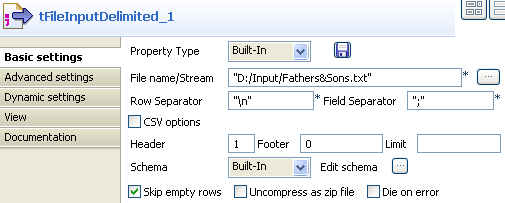

On the tFileInputDelimited

Component view, set the filepath to the

file to be denormalized.

-

Define the Header,

Row Separator and Field Separator parameters. -

The input file schema is made of two columns, Fathers and Children.

-

In the Basic settings of

tDenormalize, define the column that

contains multiple values to be grouped. -

In this use case, the column to denormalize is Children.

-

Set the Delimiter to

separate the grouped values. Beware as only one column can be

denormalized. -

Select the Merge same

value check box, if you know that some values to be grouped are

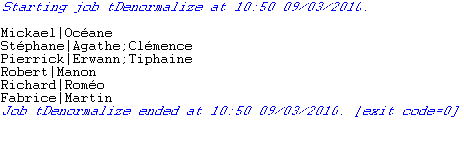

strictly identical. - Save your Job and press F6 to execute it.

All values from the column Children (set as

column to denormalize) are grouped by their Fathers column.

Values are separated by a comma.
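The grouping described in this scenario can be sketched outside Talend Studio. The following Python snippet is only an illustration of the behavior, not the code that tDenormalize generates: the `denormalize` function, its parameter names and the sample rows are hypothetical, and it assumes that every column other than the denormalized one acts as the grouping key.

```python
def denormalize(rows, column, delimiter=",", merge_same_value=False):
    """Group rows on every column except `column` and join that column's values.

    Hypothetical helper: it mimics the Column, Delimiter and Merge same value
    settings, assuming all other columns form the grouping key.
    """
    groups = {}
    for row in rows:
        # The grouping key is made of all columns except the denormalized one.
        key = tuple((name, value) for name, value in row.items() if name != column)
        values = groups.setdefault(key, [])
        # With merge_same_value, a value already collected for the group is skipped.
        if merge_same_value and row[column] in values:
            continue
        values.append(row[column])
    return [
        {**dict(key), column: delimiter.join(values)}
        for key, values in groups.items()
    ]


# Made-up sample data using the Fathers and Children columns of the scenario.
rows = [
    {"Fathers": "Paul", "Children": "Mark"},
    {"Fathers": "Paul", "Children": "Anna"},
    {"Fathers": "Peter", "Children": "Tom"},
    {"Fathers": "Paul", "Children": "Mark"},
]

result = denormalize(rows, "Children", ",", merge_same_value=True)
print(result)
```

With these sample rows, the three rows for Paul collapse into a single row whose Children value lists Mark and Anna once each; without `merge_same_value`, Mark would appear twice.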

Denormalizing on multiple columns

This scenario illustrates a Job denormalizing two columns from a delimited

file.

Denormalizing on multiple columns

-

Drop the following components: tFileInputDelimited, tDenormalize, tLogRow

from the Palette to the design

workspace. -

Connect all components using a Row

main connection. -

On the tFileInputDelimited

Basic settings panel, set the filepath to

the file to be denormalized.

-

Define the Row and

Field

separators, the Header and other information if required. -

The file schema is made of four columns including: Name, FirstName, HomeTown,

WorkTown.

-

In the tDenormalize

component Basic settings, select the

columns that contain the repetition. These are the column which are meant to

occur multiple times in the document. In this use case, FirstName, HomeCity and WorkCity are the columns against which the denormalization is

performed. -

Add as many line to the table as you need using the plus

button. Then select the relevant columns in the drop-down list.

-

In the Delimiter column,

define the separator between double quotes, to split concanated values. For FirstName column, type in “#”, for HomeCity, type in “§”, ans for WorkCity, type in

“¤”. -

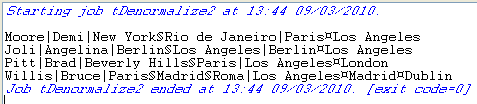

Save your Job and press F6 to execute it.

The result shows the denormalized values concatenated using a

The result shows the denormalized values concatenated using a

comma. -

Back to the tDenormalize

components Basic settings, in the To

denormalize table, select the Merge same

value check box to remove the duplicate occurrences. - Save your Job again and press F6 to execute it..

This time, the console shows the results with no duplicate

instances.
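The effect of denormalizing several columns, each with its own delimiter, can be sketched as follows. This Python snippet is an illustration under assumptions, not the component's implementation: the `denormalize` function and the sample rows are hypothetical, the column names follow the delimiter step above (FirstName, HomeCity, WorkCity), and the remaining column (Name) is assumed to be the grouping key.

```python
def denormalize(rows, delimiters, merge_same_value=False):
    """Join the values of each column listed in `delimiters`, per group.

    Hypothetical helper: `delimiters` maps a column name to its separator,
    like one line of the To denormalize table. Columns not listed there
    are assumed to form the grouping key.
    """
    key_columns = [name for name in rows[0] if name not in delimiters]
    groups = {}
    for row in rows:
        key = tuple(row[name] for name in key_columns)
        collected = groups.setdefault(key, {name: [] for name in delimiters})
        for name in delimiters:
            # With merge_same_value, duplicates within a group are dropped.
            if merge_same_value and row[name] in collected[name]:
                continue
            collected[name].append(row[name])
    output = []
    for key, collected in groups.items():
        record = dict(zip(key_columns, key))
        for name, separator in delimiters.items():
            record[name] = separator.join(collected[name])
        output.append(record)
    return output


# Made-up sample data; only the column names come from the scenario.
rows = [
    {"Name": "Smith", "FirstName": "John", "HomeCity": "Paris", "WorkCity": "Lyon"},
    {"Name": "Smith", "FirstName": "Anna", "HomeCity": "Paris", "WorkCity": "Nice"},
    {"Name": "Jones", "FirstName": "Tom", "HomeCity": "Lille", "WorkCity": "Lille"},
]
delimiters = {"FirstName": "#", "HomeCity": "§", "WorkCity": "¤"}

plain = denormalize(rows, delimiters)
merged = denormalize(rows, delimiters, merge_same_value=True)
print(plain)
print(merged)
```

In this sketch, the first run keeps the repeated HomeCity value for Smith, while the second run, which mirrors selecting Merge same value, keeps it only once.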

tDenormalize MapReduce properties (deprecated)

These properties are used to configure tDenormalize running in the MapReduce Job framework.

The MapReduce tDenormalize component belongs to the Processing family.

The component in this framework is available in all subscription-based Talend products with Big Data and Talend Data Fabric.

The MapReduce framework is deprecated from Talend 7.3 onwards. Use Talend Jobs for Apache Spark to accomplish your integration tasks.

Basic settings

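| Property | Description |
| --- | --- |
| Schema and Edit schema | A schema is a row description. It defines the number of fields to be processed and passed on to the next component. Click Edit schema to make changes to the schema. |
| | Built-In: You create and store the schema locally for this component only. |
| | Repository: You have already created the schema and stored it in the Repository. |
| To denormalize | In this table, define the parameters used to denormalize your columns. Column: Select the column to denormalize. Delimiter: Type in the separator, between double quotes, that delimits the grouped values. Merge same value: Select this check box to merge identical values. |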

Global Variables

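ERROR_MESSAGE: the error message generated by the component when an error occurs.

NB_LINE: the number of rows read by an input component or transferred to an output component.

A Flow variable functions during the execution of a component while an After variable functions after the execution of the component.

To fill up a field or expression with a variable, press Ctrl + Space to access the variable list and choose the variable to use from it.

For further information about variables, see the Talend Studio User Guide.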

Usage

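| Property | Description |
| --- | --- |
| Usage rule | In a Talend Map/Reduce Job, this component is used as an intermediate step, and the other components used along with it must be Map/Reduce components too. Note that in this documentation, unless otherwise explicitly stated, a scenario presents only Standard Jobs. |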

Related scenarios

No scenario is available for the Map/Reduce version of this component yet.

tDenormalize properties for Apache Spark Batch

These properties are used to configure tDenormalize running in the Spark Batch Job framework.

The Spark Batch tDenormalize component belongs to the Processing family.

The component in this framework is available in all subscription-based Talend products with Big Data and Talend Data Fabric.

Basic settings

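| Property | Description |
| --- | --- |
| Schema and Edit schema | A schema is a row description. It defines the number of fields to be processed and passed on to the next component. Click Edit schema to make changes to the schema. |
| | Built-In: You create and store the schema locally for this component only. |
| | Repository: You have already created the schema and stored it in the Repository. |
| To denormalize | In this table, define the parameters used to denormalize your columns. Column: Select the column to denormalize. Delimiter: Type in the separator, between double quotes, that delimits the grouped values. Merge same value: Select this check box to merge identical values. |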

Usage

| Property | Description |
| --- | --- |
| Usage rule | This component is used as an intermediate step. This component, along with the Spark Batch component Palette it belongs to, appears only when you are creating a Spark Batch Job. Note that in this documentation, unless otherwise explicitly stated, a scenario presents only Standard Jobs. |
| Spark Connection | In the Spark Configuration tab in the Run view, define the connection to a given Spark cluster for the whole Job. In addition, since the Job expects its dependent jar files for execution, you must specify the directory in the file system to which these jar files are transferred so that Spark can access these files. This connection is effective on a per-Job basis. |

Related scenarios

No scenario is available for the Spark Batch version of this component yet.

tDenormalize properties for Apache Spark Streaming

These properties are used to configure tDenormalize running in the Spark Streaming Job framework.

The Spark Streaming tDenormalize component belongs to the Processing family.

This component is available in Talend Real Time Big Data Platform and Talend Data Fabric.

Basic settings

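| Property | Description |
| --- | --- |
| Schema and Edit schema | A schema is a row description. It defines the number of fields to be processed and passed on to the next component. Click Edit schema to make changes to the schema. |
| | Built-In: You create and store the schema locally for this component only. |
| | Repository: You have already created the schema and stored it in the Repository. |
| To denormalize | In this table, define the parameters used to denormalize your columns. Column: Select the column to denormalize. Delimiter: Type in the separator, between double quotes, that delimits the grouped values. Merge same value: Select this check box to merge identical values. |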

Usage

| Property | Description |
| --- | --- |
| Usage rule | This component is used as an intermediate step. This component, along with the Spark Streaming component Palette it belongs to, appears only when you are creating a Spark Streaming Job. Note that in this documentation, unless otherwise explicitly stated, a scenario presents only Standard Jobs. |
| Spark Connection | In the Spark Configuration tab in the Run view, define the connection to a given Spark cluster for the whole Job. In addition, since the Job expects its dependent jar files for execution, you must specify the directory in the file system to which these jar files are transferred so that Spark can access these files. This connection is effective on a per-Job basis. |

Related scenarios

No scenario is available for the Spark Streaming version of this component yet.