tFileInputDelimited

Reads a delimited file row by row, splits each row into fields, and sends the fields as defined in the schema to the next component.

Depending on the Talend product you are using, this component can be used in one, some or all of the following Job frameworks:

- Standard: see tFileInputDelimited Standard properties.

  The component in this framework is available in all Talend products.

- MapReduce: see tFileInputDelimited MapReduce properties (deprecated).

  The component in this framework is available in all subscription-based Talend products with Big Data and Talend Data Fabric.

- Spark Batch: see tFileInputDelimited properties for Apache Spark Batch.

  The component in this framework is available in all subscription-based Talend products with Big Data and Talend Data Fabric.

- Spark Streaming: see tFileInputDelimited properties for Apache Spark Streaming.

  This component is available in Talend Real Time Big Data Platform and Talend Data Fabric.

tFileInputDelimited Standard properties

These properties are used to configure tFileInputDelimited running in the Standard Job framework.

The Standard tFileInputDelimited component belongs to the File family.

The component in this framework is available in all Talend products.

Basic settings

| Property type | Either Built-In or Repository. |
| | Built-In: No property data stored centrally. |
| | Repository: Select the repository file where the |
| File Name/Stream | File name: Name and path Stream: The data flow to This variable could be already pre-defined in In order to avoid the inconvenience of hand Related topic to the available variables: see Warning: Use absolute path (instead of relative path) for this field to avoid possible errors. |
| Row separator | The separator used to identify the end of a row. |
| Field separator | Enter character, string or regular expression to separate fields for the transferred |
| CSV options | Select this check box to specify the following CSV parameters: It is recommended to use standard escape character, that is "". |
| Header | Enter the number of rows to be skipped in the beginning of file. Note that when dynamic schema is used, the first row of the input |
| Footer | Number of rows to be skipped at the end of the |
| Limit | Maximum number of rows to be processed. If |
| Schema and Edit Schema | A schema is a row description. It defines the number of fields Click Edit Note that if the input value of any This This When using the dynamic schema feature, the dynamic |
| | Built-In: You create and store the schema locally for this component |
| | Repository: You have already created the schema and stored it in the |
| Skip empty rows | Select this check box to skip the empty rows. |
| Uncompress as zip file | Select this check box to uncompress the input |
| Die on error | Select the check box to stop the execution of the Job when an error Clear the check box to skip any rows on error and complete the process for To catch the |

Advanced settings

| Advanced separator (for | Select this check box to change the separator used for numbers. By default, the thousands separator is a comma (,) and the decimal separator is a period (.). |
| Extract lines at random | Select this check box to set the number of |
| Encoding | Select the encoding from the list or select Custom |
| Trim all column | Select this check box to remove the leading and trailing whitespaces from all |
| Check each row structure against | Select this check box to check whether the total number of columns |
| Check date | Select this check box to check the date format strictly against the input schema. |
| Check columns to trim | This table is filled automatically with the schema being used. Select the check |
| Split row before field | Select this check box to split rows before |
| Permit hexadecimal (0xNNN) or octal | Select this check box if any of your numeric types (long, integer, short, or byte type), will In the table that appears, select the check Select the Permit hexadecimal or octal check box to select This table appears only when the Permit hexadecimal |
| tStatCatcher Statistics | Select this check box to gather the processing |
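The Advanced separator and Permit hexadecimal or octal settings both concern how numeric text in a field is interpreted. The following is a minimal sketch of the same ideas in plain Java, not the code the component generates: the class name `NumberParsingSketch` and both method names are hypothetical, and the sample inputs are invented for illustration.

```java
import java.text.DecimalFormat;
import java.text.DecimalFormatSymbols;
import java.text.ParseException;
import java.util.Locale;

// Hypothetical helper class, not part of Talend.
public class NumberParsingSketch {

    /** Parses a number written with custom thousands and decimal separators. */
    static double parseWithSeparators(String text, char thousands, char decimal) {
        DecimalFormatSymbols symbols = new DecimalFormatSymbols(Locale.ROOT);
        symbols.setGroupingSeparator(thousands);
        symbols.setDecimalSeparator(decimal);
        DecimalFormat format = new DecimalFormat("#,##0.###", symbols);
        try {
            return format.parse(text).doubleValue();
        } catch (ParseException e) {
            throw new IllegalArgumentException("Not a number: " + text, e);
        }
    }

    /** Decodes decimal, hexadecimal (0x prefix) or octal (leading 0) integer text. */
    static long decodeInteger(String text) {
        return Long.decode(text.trim());
    }

    public static void main(String[] args) {
        // European-style input: period for thousands, comma for decimals.
        System.out.println(parseWithSeparators("1.234,56", '.', ','));
        System.out.println(decodeInteger("0x1F")); // prints 31
        System.out.println(decodeInteger("017"));  // prints 15
    }
}
```

Note that `Long.decode` treats any leading zero as an octal marker, so a zero-padded decimal value such as `017` is read as 15, which is one reason to enable this kind of decoding only for the columns that need it.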

Global Variables

| Global Variables | NB_LINE: the number of rows processed. This is an After ERROR_MESSAGE: the error message generated by the A Flow variable functions during the execution of a component while an After variable To fill up a field or expression with a variable, press Ctrl + For further information about variables, see |
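As a rough illustration of how such a variable might be read from Java code, the sketch below looks up a row count in a map keyed by component name and variable name. It assumes the same `componentName_VARIABLE` key pattern as the `tFileFetch_1_INPUT_STREAM` expression used later in this page; the `HashMap` is only a stand-in for the `globalMap` available inside a Job, and the class and method names are hypothetical.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical helper class, not part of Talend.
public class GlobalVariableSketch {

    /** Returns the row count published by a component, or 0 if none is present yet. */
    static int rowCount(Map<String, Object> globalMap, String componentName) {
        Object value = globalMap.get(componentName + "_NB_LINE");
        return value == null ? 0 : (Integer) value;
    }

    public static void main(String[] args) {
        // Stand-in for the globalMap of a running Job (assumption for this sketch).
        Map<String, Object> globalMap = new HashMap<>();
        globalMap.put("tFileInputDelimited_1_NB_LINE", 50);

        System.out.println(rowCount(globalMap, "tFileInputDelimited_1")); // prints 50
        System.out.println(rowCount(globalMap, "tFileInputDelimited_2")); // prints 0
    }
}
```

Because NB_LINE is an After variable, a lookup like this is only meaningful once the component has finished, which is why the sketch falls back to 0 when the key is absent.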

Usage

| Usage rule | Use this component to read a file and separate |
| Limitation | Due to license incompatibility, one or more JARs required to use |

Reading data from a delimited file and displaying the output

The following scenario creates a two-component Job that reads each row of a file, selects the delimited data and displays the output in the Run log console.

Dropping and linking components

- Drop a tFileInputDelimited component and a tLogRow component from the Palette to the design workspace.

- Right-click on the tFileInputDelimited component and select Row > Main. Then drag it onto the tLogRow component and release when the plug symbol shows up.

Configuring the components

- Select the tFileInputDelimited component again, and define its Basic settings:

- Fill in a path to the file in the File Name field. This field is mandatory.

  Warning: If the path of the file contains accented characters, you will get an error message when executing your Job.

- Define the Row separator used to identify the end of a row. Then define the Field separator used to delimit fields in a row.

- In this scenario, the header and footer limits are not set, and the Limit number of processed rows is set to 50.

- Set the Schema as either local (Built-in) or remotely managed (Repository) to define the data to pass on to the tLogRow component.

- You can load and/or edit the schema via the Edit Schema function.

  Related topics: see Talend Studio User Guide.

- Enter the encoding standard the input file is encoded in. This setting is meant to ensure encoding consistency throughout all input and output files.

- Select the tLogRow and define the Field separator to use for the output display. Related topic: tLogRow.

- Select the Print schema column name in front of each value check box to retrieve the column labels in the output displayed.

Saving and executing the Job

- Press Ctrl+S to save your Job.

- Go to the Run tab, and click on Run to execute the Job.

  The file is read row by row and the extracted fields are displayed on the Run log as defined in the Basic settings of both components.

  The Log sums up all parameters in a header followed by the result of the Job.
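For readers who want to see the logic of this scenario outside the Studio, here is a minimal sketch in plain Java of what the two components do together: split the content into rows, split each row into fields, stop at the limit, and print the result. It is an illustration under stated assumptions, not the code generated for the Job; the class name `DelimitedReadSketch`, the method `read` and the sample data are all hypothetical, and both separators are treated as literal strings rather than regular expressions.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Pattern;

// Hypothetical helper class, not part of Talend.
public class DelimitedReadSketch {

    /** Splits raw text into rows and fields, skipping header rows and stopping at the limit. */
    static List<String[]> read(String content, String rowSeparator, String fieldSeparator,
                               int header, int limit) {
        List<String[]> rows = new ArrayList<>();
        // Pattern.quote makes both separators literal strings.
        String[] lines = content.split(Pattern.quote(rowSeparator));
        for (int i = header; i < lines.length && rows.size() < limit; i++) {
            // The -1 argument keeps trailing empty fields instead of dropping them.
            rows.add(lines[i].split(Pattern.quote(fieldSeparator), -1));
        }
        return rows;
    }

    public static void main(String[] args) {
        // Invented sample data; no header rows skipped, limit of 50 as in the scenario.
        String content = "1;Ford;Paris\n2;Adams;Lyon\n3;Grant;Nice";
        for (String[] fields : read(content, "\n", ";", 0, 50)) {
            System.out.println(String.join("|", fields));
        }
    }
}
```

The sketch leaves out the footer, CSV escaping and encoding handling that the component also offers, so it should be read as a picture of the row and field separation only.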

Reading data from a remote file in streaming mode

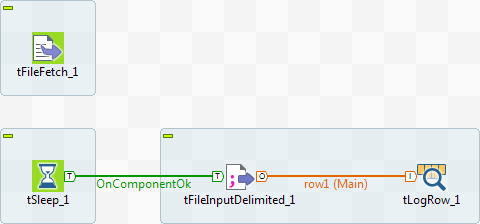

This scenario describes a four-component Job used to fetch data from a voluminous file almost as soon as it has been read. The data is displayed in the Run view. The advantage of this technique is that you do not have to wait for the entire file to be downloaded before viewing the data.

Dropping and linking components

- Drop the following components onto the workspace: tFileFetch, tSleep, tFileInputDelimited, and tLogRow.

- Connect tSleep and tFileInputDelimited using a Trigger > OnComponentOk link and connect tFileInputDelimited to tLogRow using a Row > Main link.

Configuring the components

- Double-click tFileFetch to display the Basic settings tab in the Component view and set the properties.

- From the Protocol list, select the appropriate protocol to access the server on which your data is stored.

- In the URI field, enter the URI required to access the server on which your file is stored.

- Select the Use cache to save the resource check box to add your file data to the cache memory. This option allows you to use the streaming mode to transfer the data.

- In the workspace, click tSleep to display the Basic settings tab in the Component view and set the properties.

  By default, tSleep's Pause field is set to 1 second. Do not change this setting. It pauses the second Job in order to give the first Job, containing tFileFetch, the time to read the file data.

- In the workspace, double-click tFileInputDelimited to display its Basic settings tab in the Component view and set the properties.

- In the File name/Stream field:

  - Delete the default content.
  - Press Ctrl+Space to view the variables available for this component.
  - Select tFileFetch_1_INPUT_STREAM from the auto-completion list, to add the following variable to the Filename field: ((java.io.InputStream)globalMap.get("tFileFetch_1_INPUT_STREAM")).

- From the Schema list, select Built-in and click […] next to the Edit schema field to describe the structure of the file that you want to fetch. The US_Employees file is composed of six columns: ID, Employee, Age, Address, State, EntryDate.

  Click [+] to add the six columns and set them as indicated in the above screenshot. Click OK.

- In the workspace, double-click tLogRow to display its Basic settings in the Component view and click Sync Columns to ensure that the schema structure is properly retrieved from the preceding component.

Configuring Job execution and executing the Job

- Click the Job tab and then the Extra view.

- Select the Multi thread execution check box in order to run the two Jobs at the same time. Bear in mind that the second Job has a one-second delay according to the properties set in tSleep. This option allows you to fetch the data almost as soon as it is read by tFileFetch, thanks to the tFileInputDelimited component.

- Save the Job and press F6 to run it.

  The data is displayed in the console almost as soon as it is read.

For a scenario concerning the use of dynamic schemas in tFileInputDelimited, see Writing dynamic columns from a database to an output file.
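The streaming idea in this scenario can be pictured with a short Java sketch: rows are split and printed as they arrive on an `InputStream` rather than after the whole file has been stored. This is an illustration only, not the generated Job code. The `HashMap` stands in for the Job's `globalMap`, the in-memory stream stands in for the stream published by tFileFetch, and the class name, method name and sample rows are hypothetical.

```java
import java.io.BufferedReader;
import java.io.ByteArrayInputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.InputStreamReader;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.util.HashMap;
import java.util.Map;
import java.util.regex.Pattern;

// Hypothetical helper class, not part of Talend.
public class StreamReadSketch {

    /** Prints each row as soon as it is read from the stream and returns the row count. */
    static int printRows(InputStream in, String fieldSeparator) {
        int count = 0;
        try (BufferedReader reader =
                 new BufferedReader(new InputStreamReader(in, StandardCharsets.UTF_8))) {
            String line;
            while ((line = reader.readLine()) != null) {
                String[] fields = line.split(Pattern.quote(fieldSeparator), -1);
                System.out.println(String.join("|", fields));
                count++;
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return count;
    }

    public static void main(String[] args) {
        // Stand-in for the Job's globalMap and for the stream tFileFetch would publish.
        Map<String, Object> globalMap = new HashMap<>();
        byte[] sample = "1;Ford;32\n2;Adams;45\n".getBytes(StandardCharsets.UTF_8);
        globalMap.put("tFileFetch_1_INPUT_STREAM", new ByteArrayInputStream(sample));

        // Same cast expression as the one entered in the File name/Stream field.
        InputStream in = ((java.io.InputStream) globalMap.get("tFileFetch_1_INPUT_STREAM"));
        printRows(in, ";");
    }
}
```

Reading line by line from the stream is what lets output start before the transfer is complete; the sketch assumes UTF-8 content and a newline row separator.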

tFileInputDelimited MapReduce properties (deprecated)

These properties are used to configure tFileInputDelimited running in the MapReduce Job framework.

The MapReduce tFileInputDelimited component belongs to the MapReduce family.

The component in this framework is available in all subscription-based Talend products with Big Data and Talend Data Fabric.

The MapReduce framework is deprecated from Talend 7.3 onwards. Use Talend Jobs for Apache Spark to accomplish your integration tasks.

Basic settings

| Property type | Either Built-In or Repository. |
| | Built-In: No property data stored centrally. |
| | Repository: Select the repository file where the The properties are stored centrally under the Hadoop For further information about the Hadoop |
| Schema and Edit | A schema is a row description. It defines the number of fields Click Edit schema to make changes to the schema. Note: If you make changes, the schema automatically becomes built-in. |
| | Built-In: You create and store the schema locally for this component |
| | Repository: You have already created the schema and stored it in the |
| Folder/File | Browse to, or enter the path pointing to the data to be used in the file system. If the path you set points to a folder, this component will read all of the files stored in that folder, for example, /user/talend/in; if sub-folders exist, the sub-folders are automatically ignored unless you define the property mapreduce.input.fileinputformat.input.dir.recursive to be If you want to specify more than one files or directories in this If the file to be read is a compressed one, enter the file name with Note that you need |
| Die on error | Clear the check box to skip any rows on error and complete the process for |
| Row separator | The separator used to identify the end of a row. |
| Field separator | Enter character, string or regular expression to separate fields for the transferred |
| Header | Enter the number of rows to be skipped in the beginning of file. |
| CSV options | Select this check box to include CSV specific parameters such as Escape char and Text |
| Skip empty rows | Select this check box to skip the empty rows. |

Advanced settings

| Custom Encoding | You may encounter encoding issues when you process the stored data. In that Then select the encoding to be used from the list or select Custom and define it manually. |
| Advanced separator (for number) | Select this check box to change the separator used for numbers. By default, the thousands separator is a comma (,) and the decimal separator is a period (.). |
| Trim all columns | Select this check box to remove the leading and trailing whitespaces from all |
| Check column to trim | This table is filled automatically with the schema being used. Select the check |
| Check each row structure against | Select this check box to check whether the total number of columns |
| Check date | Select this check box to check the date format strictly against the input schema. |
| Decode String for long, int, short, byte | Select this check box if any of your numeric types (long, integer, short, or byte type), will |

Global Variables

| Global Variables | ERROR_MESSAGE: the error message generated by the A Flow variable functions during the execution of a component while an After variable To fill up a field or expression with a variable, press Ctrl + For further information about variables, see |

Usage

| Usage rule | In a Once a Map/Reduce Job is opened in the workspace, tFileInputDelimited as well as the MapReduce Note that in this documentation, unless otherwise |
| Hadoop Connection | You need to use the Hadoop Configuration tab in the This connection is effective on a per-Job basis. |

Related scenarios

No scenario is available for the Map/Reduce version of this component yet.

tFileInputDelimited properties for Apache Spark Batch

These properties are used to configure tFileInputDelimited running in the Spark Batch Job framework.

The Spark Batch tFileInputDelimited component belongs to the File family.

The component in this framework is available in all subscription-based Talend products with Big Data and Talend Data Fabric.

Basic settings

| Define a storage configuration | Select the configuration component to be used to provide the configuration If you leave this check box clear, the target file system is the local The configuration component to be used must be present in the same Job. |
| Property type | Either Built-In or Repository. |
| | Built-In: No property data stored centrally. |
| | Repository: Select the repository file where the The properties are stored centrally under the Hadoop For further information about the Hadoop |
| Schema and Edit | A schema is a row description. It defines the number of fields Click Edit schema to make changes to the schema. Note: If you make changes, the schema automatically becomes built-in. |
| | Built-In: You create and store the schema locally for this component |
| | Repository: You have already created the schema and stored it in the |
| Folder/File | Browse to, or enter the path pointing to the data to be used in the file system. If the path you set points to a folder, this component will read all of the files stored in that folder, for example, /user/talend/in; if sub-folders exist, the sub-folders are automatically ignored unless you define the property spark.hadoop.mapreduce.input.fileinputformat.input.dir.recursive to be true in the Advanced properties table in the Spark configuration tab. If you want to specify more than one files or directories in this If the file to be read is a compressed one, enter the file name with The button for browsing does not work with the Spark tHDFSConfiguration |
| Die on error | Select the check box to stop the execution of the Job when an error |
| Row separator | The separator used to identify the end of a row. |
| Field separator | Enter character, string or regular expression to separate fields for the transferred |
| Header | Enter the number of rows to be skipped in the beginning of file. |
| CSV options | Select this check box to include CSV specific parameters such as Escape char and Text |
| Splittable | Select this check box to enable your Spark cluster to use multiple executors to read large files in parallel. This check box is available when you have selected the CSV options check box. |
| Skip empty rows | Select this check box to skip the empty rows. |

Advanced settings

| Set minimum partitions | Select this check box to control the number of partitions to be created from the input In the displayed field, enter, without quotation marks, the minimum number of partitions When you want to control the partition number, you can generally set at least as many partitions as |
| Custom Encoding | You may encounter encoding issues when you process the stored data. In that Then select the encoding to be used from the list or select Custom and define it manually. |
| Advanced separator (for number) | Select this check box to change the separator used for numbers. By default, the thousands separator is a comma (,) and the decimal separator is a period (.). |
| Trim all columns | Select this check box to remove the leading and trailing whitespaces from all |
| Check column to trim | This table is filled automatically with the schema being used. Select the check |
| Check each row structure against | Select this check box to check whether the total number of columns |
| Check date | Select this check box to check the date format strictly against the input schema. |
| Decode String for long, int, short, byte | Select this check box if any of your numeric types (long, integer, short, or byte type), will |
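The trimming and row structure options above can be pictured with a small Java sketch. It assumes that a row is rejected when its number of fields differs from the number of schema columns, which is one plausible reading of the truncated description and not a statement of how the component behaves. The class name `RowCheckSketch`, the method `parseRow` and the sample row are hypothetical.

```java
import java.util.regex.Pattern;

// Hypothetical helper class, not part of Talend.
public class RowCheckSketch {

    /** Splits a row, verifies the column count against the schema, and trims every field. */
    static String[] parseRow(String line, String fieldSeparator, int expectedColumns) {
        // The -1 argument keeps trailing empty fields so they count as columns.
        String[] fields = line.split(Pattern.quote(fieldSeparator), -1);
        if (fields.length != expectedColumns) {
            throw new IllegalArgumentException(
                "Expected " + expectedColumns + " columns but found " + fields.length);
        }
        for (int i = 0; i < fields.length; i++) {
            fields[i] = fields[i].trim(); // remove leading and trailing whitespace
        }
        return fields;
    }

    public static void main(String[] args) {
        String[] fields = parseRow(" 1 ; Ford ;Paris ", ";", 3);
        System.out.println(String.join("|", fields)); // prints 1|Ford|Paris
    }
}
```

Whether a mismatching row stops the run or is skipped would then depend on a setting such as Die on error; the sketch simply throws so the caller can decide.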

Usage

| Usage rule | This component is used as a start component and requires an output This component, along with the Spark Batch component Palette it belongs to, Note that in this documentation, unless otherwise explicitly stated, a |
| Spark Connection | In the Spark Configuration tab in the Run view, define the connection to a given Spark cluster for the whole Job. In addition, since the Job expects its dependent jar files for execution, you must specify the directory in the file system to which these jar files are transferred so that Spark can access these files: This connection is effective on a per-Job basis. |

Related scenarios

No scenario is available for the Spark Batch version of this component yet.

tFileInputDelimited properties for Apache Spark Streaming

These properties are used to configure tFileInputDelimited running in the Spark Streaming Job framework.

The Spark Streaming tFileInputDelimited component belongs to the File family.

This component is available in Talend Real Time Big Data Platform and Talend Data Fabric.

Basic settings

| Define a storage configuration | Select the configuration component to be used to provide the configuration If you leave this check box clear, the target file system is the local The configuration component to be used must be present in the same Job. |
| Property type | Either Built-In or Repository. |
| | Built-In: No property data stored centrally. |
| | Repository: Select the repository file where the The properties are stored centrally under the Hadoop For further information about the Hadoop |
| Schema and Edit | A schema is a row description. It defines the number of fields Click Edit schema to make changes to the schema. Note: If you make changes, the schema automatically becomes built-in. |
| | Built-In: You create and store the schema locally for this component |
| | Repository: You have already created the schema and stored it in the |
| Folder/File | Browse to, or enter the path pointing to the data to be used in the file system. If the path you set points to a folder, this component will read all of the files stored in that folder, for example, /user/talend/in; if sub-folders exist, the sub-folders are automatically ignored unless you define the property spark.hadoop.mapreduce.input.fileinputformat.input.dir.recursive to be true in the Advanced properties table in the Spark configuration tab. If you want to specify more than one files or directories in this If the file to be read is a compressed one, enter the file name with The button for browsing does not work with the Spark tHDFSConfiguration |
| Die on error | Select the check box to stop the execution of the Job when an error |
| Row separator | The separator used to identify the end of a row. |
| Field separator | Enter character, string or regular expression to separate fields for the transferred |
| Header | Enter the number of rows to be skipped in the beginning of file. |
| CSV options | Select this check box to include CSV specific parameters such as Escape char and Text |
| Skip empty rows | Select this check box to skip the empty rows. |

Advanced settings

| Set minimum partitions | Select this check box to control the number of partitions to be created from the input In the displayed field, enter, without quotation marks, the minimum number of partitions When you want to control the partition number, you can generally set at least as many partitions as |
| Custom Encoding | You may encounter encoding issues when you process the stored data. In that Then select the encoding to be used from the list or select Custom and define it manually. |
| Advanced separator (for number) | Select this check box to change the separator used for numbers. By default, the thousands separator is a comma (,) and the decimal separator is a period (.). |
| Trim all columns | Select this check box to remove the leading and trailing whitespaces from all |
| Check column to trim | This table is filled automatically with the schema being used. Select the check |
| Check each row structure against | Select this check box to check whether the total number of columns |
| Check date | Select this check box to check the date format strictly against the input schema. |
| Decode String for long, int, short, byte | Select this check box if any of your numeric types (long, integer, short, or byte type), will |

Usage

| Usage rule | This component is used as a start component and requires an output link. This component is only used to provide the lookup flow (the right side of a join This component, along with the Spark Streaming component Palette it belongs to, appears Note that in this documentation, unless otherwise explicitly stated, a scenario presents |
| Spark Connection | In the Spark Configuration tab in the Run view, define the connection to a given Spark cluster for the whole Job. In addition, since the Job expects its dependent jar files for execution, you must specify the directory in the file system to which these jar files are transferred so that Spark can access these files: This connection is effective on a per-Job basis. |

Related scenarios

No scenario is available for the Spark Streaming version of this component yet.